This content has moved to http://www.openanalysis.net/#training

This is the long, annotated version of a short presentation I put together outlining the crowdsource tools I have used in the past for malware triage.

In an enterprise environment you may find yourself in a situation where you need to perform malware triage but simply don't have access to the tools you need (sometimes this is the result of your GRC approvals lagging behind technology, or you may simply be in the early stages of building your Incident Response program).

In these situations you will need to rely on online tools. You can perform most malware triage using nothing more than Notepad, a web browser, and the internet.

Pro tip: Instead of using notepad.exe, try using OneNote http://www.onenote.com/ or Evernote https://evernote.com/ and keep all of your notes from past triages. This gives you a central, searchable repository that can provide insight during future malware triages.

The crowdsource tools we will look at are a mix of tools specifically aimed at Incident Responders (such as crowdsource intelligence offerings) and tools that are just useful during the triage process if we don't have a local equivalent handy.

It should be noted that even on a completely vanilla Windows 7 install with application whitelisting, many of these tools can be recreated locally using PowerShell. However, for the sake of this presentation we will try to accomplish all analysis with online tools.

Obviously by their very nature these tools do not support strong operational security practices! If you are trying to avoid tipping off an adversary that you are investigating them, don't use these tools.

Pro tip: If you don't have the local tools/lab you need and you are trying to analyze an APT, you have already lost. This presentation is not for you.

The scenario we will use as our demo involves receiving an e-mail with a suspicious link in it. We want to triage that URL.

Pro tip: you will note that in the screenshot it appears as though we are drafting the suspicious e-mail, not receiving it... maybe we are... maybe I was tired when I took the screenshot... maybe we should move on...

The triage workflow that we will be using to analyze the URL.

During the passive analysis phase we try to gather information about the URL without actually interacting with it. This is one of the areas where tools specific to Incident Responders have really improved in the past few years. There are tons of tools available; I've just listed the ones I use daily.

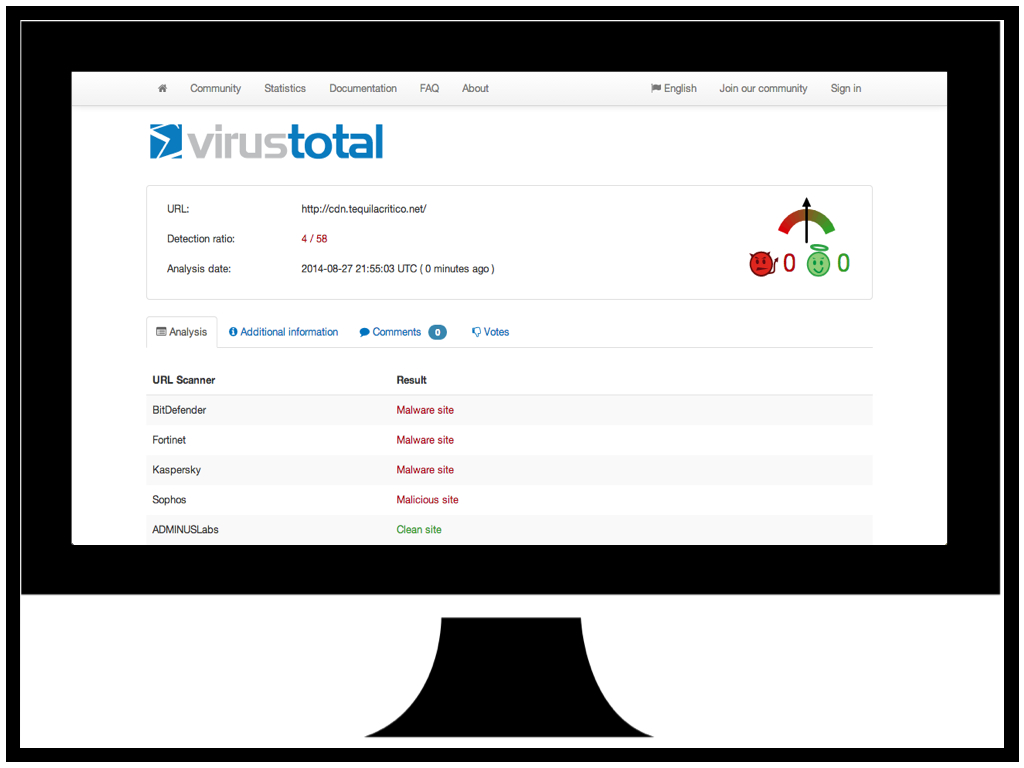

We are all familiar with https://www.virustotal.com/ so not much needs to be said here.

The URL we are triaging certainly looks malicious...

BlueCoat offers this great service https://sitereview.bluecoat.com/sitereview.jsp that will provide a "classification" for a domain you are interested in. In addition to classifying malicious domains, it also provides information on domains that serve potentially unwanted software and adware.

Again BlueCoat confirms that the domain for the URL we are triaging appears to be malicious.

The https://www.passivetotal.org site is a resource that allows researchers and other incident responders to "tag" domains with information such as the malware family they are associated with.

In this case the URL we are triaging has been tagged as "Crime" for crimeware and "Sweet Orange" possibly indicating that it leads to the Sweet Orange Exploit Kit.

The https://www.domaintools.com/ site has a suite of tools that can be used to identify the owners of domains, or group similar domains. This is a good place to start if you suspect a website has been compromised and you want to notify the owner.

In our case the URL doesn't have much useful information, but we can see that it is hosted on a shared hosting site. Possibly something to note for follow-up later.

Once we have gathered all the information we can from passive analysis it is time to interact with the URL.

Since we won't be using any local tools other than a web browser, we will need some online tools to help us download and save a copy of the page at the URL.

Since we won't be interacting with the URL directly through our web browser, we will want to profile our browser's user agent string so we can mirror it with our tools. Many exploit kits deploy specific exploits based on the user agent string (and other browser features), so it is best to mimic the environment you are trying to protect. http://www.useragentstring.com/index.php is a great tool to determine what your user agent is.

Now that we are ready to interact with the URL, we want to download a copy of the page on our very first interaction. Many exploit kits have a "request limit" and will stop responding after 2 or 3 requests. This is to protect the EK from people like us : )

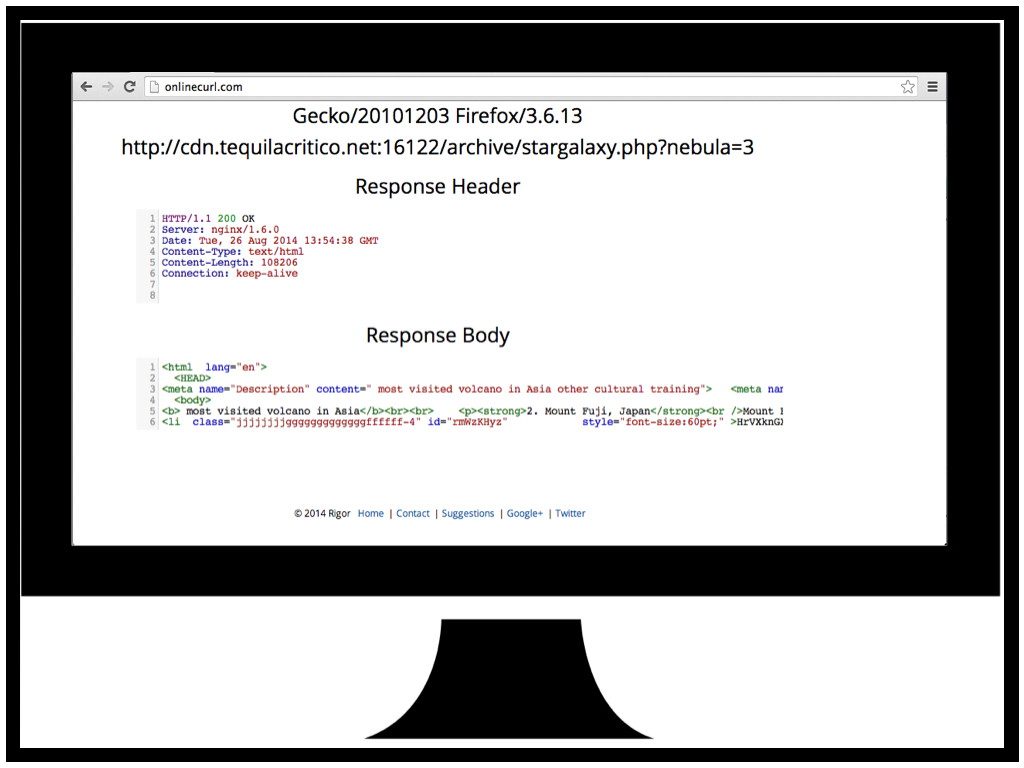

For this task we use http://onlinecurl.com/, an online version of the cURL tool everyone is familiar with. The online version has all the features of the CLI version, including options such as a custom user agent string.
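If you do happen to have a Python interpreter handy, the same "save it on the first request" discipline can be sketched with the standard library's `urllib.request`. This is a minimal sketch, not a replacement for onlinecurl: the user agent value and the `build_request`/`fetch_once` helpers are hypothetical, and the helper simply refuses to make a second request once a copy of the page is cached on disk.

```python
import os
import urllib.request

# Hypothetical example value: paste the string useragentstring.com reports
# for the browser you are trying to protect.
USER_AGENT = "Mozilla/5.0 (Windows NT 6.1; WOW64; Trident/7.0; rv:11.0) like Gecko"


def build_request(url: str, user_agent: str = USER_AGENT) -> urllib.request.Request:
    """Build a GET request that presents the mirrored user agent string."""
    return urllib.request.Request(url, headers={"User-Agent": user_agent})


def fetch_once(url: str, cache_path: str, opener=urllib.request.urlopen) -> bytes:
    """Hypothetical helper: return the cached copy if we already have one,
    otherwise request the URL exactly once and save the raw response body."""
    if os.path.exists(cache_path):
        with open(cache_path, "rb") as fh:
            return fh.read()  # never spend a second request on the EK
    with opener(build_request(url)) as resp:
        body = resp.read()
    with open(cache_path, "wb") as fh:
        fh.write(body)
    return body
```

The `opener` parameter defaults to `urlopen`; it exists only so the download function can be swapped out when you want to exercise the caching logic without touching the network.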

We request our triage URL and now we have a copy of the HTML code to analyze (more on this in a minute).

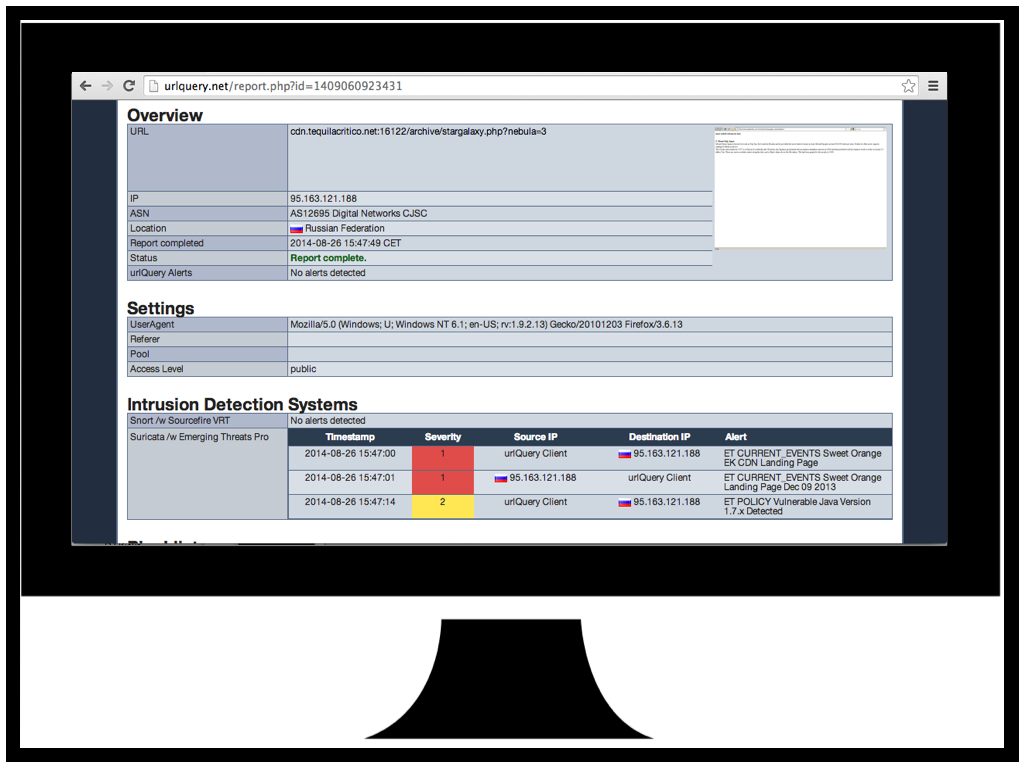

Now that we have a copy of the page and aren't worried about hitting the request limit, we can try to analyze the URL with http://urlquery.net/. URLQuery is a browser sandbox that will retrieve the URL you want to analyze and run the resulting traffic past some IDS/IPS sensors. If the sensors detect any malicious traffic, the alerts will be displayed.

In our case we can see that we have had a few IDS hits related to "Sweet Orange EK", confirming our earlier suspicion that this is the Sweet Orange Exploit Kit. We also have a hit for a vulnerable Java version check. Definitely something to keep in mind as we proceed.

Web component analysis is just fancy language for "read the HTML and JS". During this phase we just want to figure out what the page is doing. The tool you will use the most is your own understanding of HTML and Javascript.

The code for our URL is already starting to take shape. We can see an <li> tag with id=rmWzKHyz that looks like it contains some encoded/encrypted data, and some JavaScript functions that look like they may be used to decode/decrypt it.
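Pulling the encoded blob out of the page by hand is easy enough in a text editor, but here is a minimal sketch using Python's built-in `html.parser` that grabs the text of the element with a given id. The class and helper names are hypothetical, and the sketch assumes the target element is properly closed and contains no unclosed void tags such as `<br>`.

```python
from html.parser import HTMLParser


class ElementTextByID(HTMLParser):
    """Hypothetical helper: collect the text inside the first element whose id matches."""

    def __init__(self, target_id: str):
        super().__init__()
        self.target_id = target_id
        self.depth = 0       # nesting depth while we are inside the target element
        self.done = False    # set once the target element has closed
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if self.depth:
            self.depth += 1  # a child element opened inside the target
        elif not self.done and dict(attrs).get("id") == self.target_id:
            self.depth = 1

    def handle_endtag(self, tag):
        if self.depth:
            self.depth -= 1
            if self.depth == 0:
                self.done = True

    def handle_data(self, data):
        if self.depth:
            self.chunks.append(data)


def extract_by_id(html: str, target_id: str) -> str:
    parser = ElementTextByID(target_id)
    parser.feed(html)
    parser.close()
    return "".join(parser.chunks).strip()
```

For example, `extract_by_id(page_html, "rmWzKHyz")` would return the encoded string sitting inside that `<li>`.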

Now that it's time for us to take a closer look at the JavaScript, it may be tempting to just upload it to one of the many "JavaScript analysis sandboxes" that exist. In my experience these never work for what we want. Keep in mind that we are trying to understand what the JavaScript is doing, not just "is it bad".

In this case we can see that the Wepawet sandbox has identified our web page as benign when it clearly isn't.

For JavaScript analysis I recommend finding an online JS interpreter instead of running the JS live in your browser. This eliminates the risk of compromising your own workstation if you make a mistake. I prefer the http://math.chapman.edu/~jipsen/js/ online JS interpreter, as it has no document object, so if the JS is appending code to the document you will quickly identify this with an error.

To analyze our URL's JavaScript, we copy it over to the JS interpreter and run it with the final eval() statement removed. As we can see, some new JavaScript is printed to the console... could this be the decrypted JS hidden in the <li> tag?
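The "remove the final eval()" trick is a plain text edit, so it can be sketched in a few lines. This hypothetical helper swaps the last `eval(` call for a print-style call so the decoded string is displayed instead of executed. The default `print(` replacement is an assumption about which output function your interpreter provides (pass `console.log(` for a browser console), and the regex is a heuristic, not a JavaScript parser.

```python
import re

# Matches "eval(" (optionally "eval (") when not part of a longer identifier like "myeval(".
EVAL_CALL = re.compile(r"\beval\s*\(")


def neutralize_last_eval(js: str, replacement: str = "print(") -> str:
    """Hypothetical helper: replace the final eval( call so the payload is shown, not run."""
    matches = list(EVAL_CALL.finditer(js))
    if not matches:
        raise ValueError("no eval( call found")
    last = matches[-1]
    return js[: last.start()] + replacement + js[last.end():]
```

Only the last call is touched because, as in our sample, the final `eval()` is usually the one that hands the decoded blob to the JS engine; earlier calls may be needed to build it.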

Here I have presented a different approach for those careless/brave enough to run the JS in their own browser: using the Developer Tools built into Google Chrome to debug the JS.

If we copy that JS output back into jsbeautifier and clean it up, we can see something that looks very suspicious. There appear to be three different print statements and some JavaScript that checks for plugins.

If we copy the contents of the print statements and beautify them, we can see there are three different possible exploits loaded: one Flash and two Java (we don't know they are exploits, but we are plenty suspicious). For the purpose of this presentation I have chosen to analyze the second Java one, as it provides the best opportunity to showcase the most tools.

If we look at the second Java one, we can see that there are some parameters that appear to be obfuscated and a long string assigned to the jnlp_embedded parameter. It's not apparent in this slide, but at the end of the string there is "==", suggesting that it might be base64 encoded.
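A trailing "==" is only a hint: base64 padding can be zero, one, or two "=" characters. A slightly stronger check, sketched below with Python's `base64` module, is that the string uses only the base64 alphabet, has a length that is a multiple of 4, and actually decodes. The function name is hypothetical.

```python
import base64
import binascii
import re

# Standard base64 alphabet, with at most two "=" padding characters at the very end.
B64_SHAPE = re.compile(r"[A-Za-z0-9+/]*={0,2}")


def looks_like_base64(s: str) -> bool:
    """Hypothetical heuristic: alphabet, length, and a successful strict decode."""
    compact = "".join(s.split())  # ignore line breaks inside long attribute values
    if not compact or len(compact) % 4 != 0:
        return False
    if not B64_SHAPE.fullmatch(compact):
        return False
    try:
        base64.b64decode(compact, validate=True)
    except binascii.Error:
        return False
    return True
```

Passing this check still only means "decodable"; short strings of plain letters can pass too, so treat it as a triage hint.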

Since we don't have any local base64 decode tools we can use http://www.base64decode.org/ to decode the string.

Here we see that the string contains a reference to the Jar (OmXIIEr.jar) and the preloader class (WxIOiLd). We can now download the Jar and start analyzing the classes starting with the preloader.
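If you would rather not paste the blob into a website, the decode step and the hunt for the jar and class names can be sketched with Python's `base64` and `xml.etree.ElementTree`. This assumes the decoded JNLP is well-formed XML without a namespace. Since the attribute that names the preloader may vary (for example `progress-class`), the hypothetical `summarize_jnlp` helper collects every attribute whose name ends in `class` rather than relying on one specific name.

```python
import base64
import xml.etree.ElementTree as ET


def summarize_jnlp(b64_blob: str) -> dict:
    """Hypothetical helper: decode a jnlp_embedded value and list jar hrefs plus
    every attribute whose name ends in 'class' (keyed as 'element/attribute')."""
    raw = base64.b64decode("".join(b64_blob.split()))  # tolerate line-wrapped blobs
    # Parsing bytes lets ElementTree honour any XML encoding declaration.
    # Note: xml.etree is not hardened against hostile XML; fine for a quick look.
    root = ET.fromstring(raw)
    jars = [el.get("href") for el in root.iter("jar") if el.get("href")]
    classes = {
        f"{el.tag}/{name}": value
        for el in root.iter()
        for name, value in el.attrib.items()
        if name.endswith("class")
    }
    return {"jars": jars, "classes": classes}
```

On our sample this is where `OmXIIEr.jar` and `WxIOiLd` would show up; which element carries the preloader name depends on how the JNLP was written.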

Now that we know how the exploit is going to be delivered it's time to actually analyze the exploit.

We can download the Jar file with our web browser without any risk, as it is benign without the web component to load it. Once downloaded, we can just change the .jar extension to .zip and use native tools to unzip it, since a Jar is a ZIP archive (http://en.wikipedia.org/wiki/JAR_(file_format)).

Here we can see the jar contains a bunch of class files including the preloader class and a strange file with a .qvcw extension.
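Renaming to .zip is only needed for the native Windows unzip tools; Python's `zipfile` module reads a jar as-is. This minimal sketch (the `list_jar` name is hypothetical) splits the entries into .class files and everything else, which is where oddities like the .qvcw file show up. Expect `META-INF/MANIFEST.MF` in the "other" list as well.

```python
import zipfile


def list_jar(path_or_file):
    """Hypothetical helper: return (class_entries, other_entries) for a jar.
    Accepts a path or a binary file object; no renaming to .zip required."""
    with zipfile.ZipFile(path_or_file) as jar:
        names = [n for n in jar.namelist() if not n.endswith("/")]  # skip directory entries
    classes = [n for n in names if n.endswith(".class")]
    others = [n for n in names if not n.endswith(".class")]
    return classes, others
```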

Pro tip: If for some reason you don't have a native unzip tool (maybe you are using a Chromebook?), there are plenty of online zip tools, such as http://b1.org/online.

We can also try uploading the jar to Virus Total to see what anti-virus thinks of it.
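VirusTotal can also be searched by hash, which lets you check whether a sample is already known before uploading it. Here is a minimal sketch with Python's `hashlib` (the `file_hashes` name is hypothetical) that computes the usual MD5, SHA-1, and SHA-256 digests while reading the file in chunks.

```python
import hashlib


def file_hashes(path: str, chunk_size: int = 65536) -> dict:
    """Hypothetical helper: MD5, SHA-1 and SHA-256 of a file, read in chunks
    so large samples never have to fit in memory at once."""
    digests = {"md5": hashlib.md5(), "sha1": hashlib.sha1(), "sha256": hashlib.sha256()}
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            for h in digests.values():
                h.update(chunk)
    return {name: h.hexdigest() for name, h in digests.items()}
```

The same digests are handy later as simple file indicators when sweeping the enterprise.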

As always, anti-virus is working overtime with 2/55 detections, but one of those 2 hits gives us CVE-2013-2460. Now we have a pretty good idea what the exploit is. If you want to stop here you can, but I always suggest following through and verifying that the AV guys got it right. We all remember how many false positives they had on CVE-2014-1761; you would have been exposed to lots of risk if you trusted the AV signatures for that...

If you aren't familiar with the great work from Dan Guido and the Exploit Intelligence Project, go check it out: https://www.isecpartners.com/media/12955/eip-final.pdf. The project analyzed all major exploit kits in 2009-2010 and identified the origins of the exploits they were using. It turned out that none of them used 0-days; they all relied on exploits that had been discovered by white hats or were in published analyses of APT campaigns.

Pro tip: You have a very very high chance of finding the exploit published online if you are triaging crimeware. I like to look in the Metasploit github https://github.com/rapid7/metasploit-framework/tree/master/modules/exploits. Once you have found the exploit you are looking for you don't actually need to reverse the malware you just need to do some code comparison. This is the secret to quick triage.

For our triage we have found CVE-2013-2460 in the Metasploit GitHub repository. Now all we need to do is look at the Java code in the jar and see if it matches.

In order to decompile the jar class files we can use http://www.showmycode.com/ an online java and flash decompiler.

Here we have decompiled the preloader class and we can see that the java code is heavily obfuscated.

The best way to de-obfuscate Java code is to run it with a debugger and print statements. This requires a bit of understanding of Java, but it's fairly straightforward. I like to use http://ideone.com/, an online IDE, compiler, and debugger all in one; no local Java tools required.

Here we are decoding encoded strings in the Java code and printing them to stdout.

Once we have decoded all the strings in the Java code, we substitute them back into the code, and here we have some code that very closely resembles part of the Metasploit CVE-2013-2460 module.
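The decode-and-substitute loop can also be sketched in Python, as a port rather than running the Java itself. Everything here is hypothetical: `hypothetical_decode` (a shift-by-one cipher) stands in for a port of the sample's real decoder, and the decoder call name passed in is invented for illustration. The regex only handles calls whose single argument is a plain string literal, and it does not interpret Java escape sequences inside that literal.

```python
import re


def hypothetical_decode(s: str) -> str:
    """HYPOTHETICAL stand-in for the sample's own decoder: shift every character
    down by one code point. Replace with a port of the real routine."""
    return "".join(chr(ord(c) - 1) for c in s)


def inline_decoded_strings(java_src: str, decoder_name: str, decode=hypothetical_decode) -> str:
    """Replace every decoder_name("literal") call with the decoded string literal."""
    pattern = re.compile(re.escape(decoder_name) + r'\(\s*"((?:[^"\\]|\\.)*)"\s*\)')

    def repl(m: re.Match) -> str:
        decoded = decode(m.group(1))
        # Re-escape backslashes and quotes so the result is still a valid Java literal.
        escaped = decoded.replace("\\", "\\\\").replace('"', '\\"')
        return '"' + escaped + '"'

    return pattern.sub(repl, java_src)
```

For example, `inline_decoded_strings('Class.forName(x.q("kbwb/mboh/Tztufn"))', "x.q")` rewrites the call to `Class.forName("java.lang.System")`, which is far easier to compare against the Metasploit source.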

But what about that strange file with the .qvcw extension? Here we see it loaded as a string along with the string "555546DZD2A1FD2992".

If we open the .qvcw file in Notepad, we can see that it is a text file with the 5555 string repeated in it a lot.

Let's use a find/replace on the 5555 string and bingo we have a serialized class.
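The find/replace step can be sketched in Python as well. This sketch assumes that once the `555546DZD2A1FD2992` marker is removed, the remaining text is a hex encoding of the bytes; that is an assumption about this sample, not something the screenshots establish. It then checks for the standard Java serialization stream header (`AC ED 00 05`) so you know the result really is a serialized object. The function name is hypothetical.

```python
import binascii

MARKER = "555546DZD2A1FD2992"            # junk string observed in this sample
JAVA_STREAM_HEADER = b"\xac\xed\x00\x05"  # STREAM_MAGIC (0xACED) + STREAM_VERSION (5)


def recover_serialized(text: str, marker: str = MARKER) -> bytes:
    """Hypothetical helper: strip the marker, hex-decode (ASSUMED encoding),
    and confirm the bytes start with a Java serialization header."""
    cleaned = "".join(text.replace(marker, "").split())  # drop marker and whitespace
    data = binascii.unhexlify(cleaned)  # raises binascii.Error if not clean hex
    if not data.startswith(JAVA_STREAM_HEADER):
        raise ValueError("result does not start with the Java serialization header")
    return data
```

If `unhexlify` fails, the leftover text is not plain hex and the encoding assumption needs revisiting before going any further.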

If we deserialize the class and decompile it we are left with the other part of the Metasploit CVE-2013-2460 exploit (the part that disables the sandbox).

Pro tip: if Virus Total had not provided a CVE for us to look for, we would have analyzed the exploit code as we have here, but once we got to this point we would have searched Google for some of the strings from this class to try to match the exploit. The reason we use strings from this class is that it was serialized without being obfuscated, so there is a good chance that it is copied code, or at least a better chance that the order of some of the code will match a blog post or git commit.
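Pulling candidate search strings out of a class file is essentially what the Unix `strings` utility does. Here is a minimal Python sketch of the same idea (the `printable_strings` helper is hypothetical, and the 6-character minimum is an arbitrary choice to cut down on noise) that returns runs of printable ASCII.

```python
import re


def printable_strings(data: bytes, min_len: int = 6) -> list:
    """Hypothetical helper: return runs of printable ASCII (0x20-0x7E)
    at least min_len bytes long, similar in spirit to the Unix strings tool."""
    pattern = re.compile(rb"[\x20-\x7e]{%d,}" % min_len)
    return [m.group().decode("ascii") for m in pattern.finditer(data)]
```

Class and method names such as `java/security/AccessController` tend to be the most useful search terms, since they survive copy-paste between public exploit code and the sample.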

If you are in the unfortunate position that your organization does have exposure to the exploit (perhaps you can't patch Java due to some legacy application) you will want to analyze the payload that is delivered by this exploit so you can better inform the risk function of your security program and/or sweep your enterprise for indicators.

Here we are decoding the strings that provide the URL to download the payload. Since the payload is a PE it can safely be downloaded directly with your web browser.
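Before handing the download to Virus Total, it is worth confirming that you actually got a PE and not, say, an HTML error page from the exploit kit. A minimal sketch of the standard check: a PE file starts with the DOS `MZ` header, and the 32-bit little-endian value at offset 0x3C (`e_lfanew`) points to the `PE\0\0` signature. The `is_pe` name is hypothetical, and the check says nothing about whether the file is malicious.

```python
import struct


def is_pe(data: bytes) -> bool:
    """Hypothetical helper: check the 'MZ' DOS header and the 'PE\\0\\0'
    signature at the offset stored in e_lfanew (0x3C)."""
    if len(data) < 0x40 or data[:2] != b"MZ":
        return False
    (e_lfanew,) = struct.unpack_from("<I", data, 0x3C)  # little-endian uint32
    return data[e_lfanew:e_lfanew + 4] == b"PE\x00\x00"
```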

For the payload analysis we aren't going to go deep into malware reversing; all we want to do is understand our AV coverage, identify the malware family/goals, and get some indicators in case we need to sweep our enterprise for compromises.

Here we have the AV vendors doing a spectacular job: 5/55 detections and no clear indication of what this malware is.

Virus Total also comes with a built-in sandbox that will provide some high-level indicators.

The traffic captures from the Virus Total sandbox are particularly useful for identifying the malware family using Google.
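If you copy the HTTP requests out of the sandbox report as plain text, a small parser can turn them into method/host/path tuples ready for Googling or for your sweep list. This is a hypothetical helper that assumes the usual HTTP/1.x layout, with a request line followed by its headers; it is not tied to any particular Virus Total report format.

```python
import re

REQUEST_LINE = re.compile(r"^(GET|POST|HEAD|PUT) (\S+) HTTP/1\.[01]\s*$")
HOST_HEADER = re.compile(r"^Host:\s*(\S+)\s*$", re.IGNORECASE)


def http_requests(dump: str) -> list:
    """Hypothetical helper: pair each HTTP request line with the Host header
    that follows it, returning (method, host, path) tuples."""
    results, pending = [], None
    for line in dump.splitlines():  # handles both \n and \r\n line endings
        m = REQUEST_LINE.match(line)
        if m:
            pending = (m.group(1), m.group(2))
            continue
        h = HOST_HEADER.match(line)
        if h and pending:
            results.append((pending[0], h.group(1), pending[1]))
            pending = None
    return results
```

Unusual URI paths (a distinctive gate script name, for example) usually make better search terms than the hosts, which tend to change between campaigns.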

Based on these traffic samples we were able to identify this payload as Qakbot.

Hands down the best tool for analyzing binary malware is https://malwr.com/, an online sandbox. Unfortunately they recently ran out of resources and had to temporarily stop accepting submissions. They promise to be up and running again soon.

Other online sandboxes tend to leave something to be desired: they either don't work or they are overloaded. For a long list see http://zeltser.com/reverse-malware/automated-malware-analysis.html

You can also try uploading the sample to http://totalhash.com/. TotalHash will provide you with its own set of indicators from a sandbox run, which are nice to compare with Virus Total, but it will also make your sample hash searchable so that malware researchers can use it to identify groups of similar malware. It's a nice way to give back to the community, and you may get some extra info.

Finally, now that we have identified the malware as a variant of the Qakbot family, we can go to https://www.iocbucket.com/ and search to see if there are any OpenIOCs available for the malware. IOC Bucket is an initiative to help share malware IOCs within the IR and research community.

At the time of this presentation there were no IOCs for Qakbot. If you create an IOC be sure to share it : )

Finally, most of these tools would not be possible without community involvement. If you use these tools try to give back. Even leaving comments on Virus Total helps.
